2023-07-26 | Press Releases

Fueling Creativity in the Digital World

FOR IMMEDIATE RELEASE

26 July 2023

Media Contact:

Marketing & Media Office

media@siggraph.org

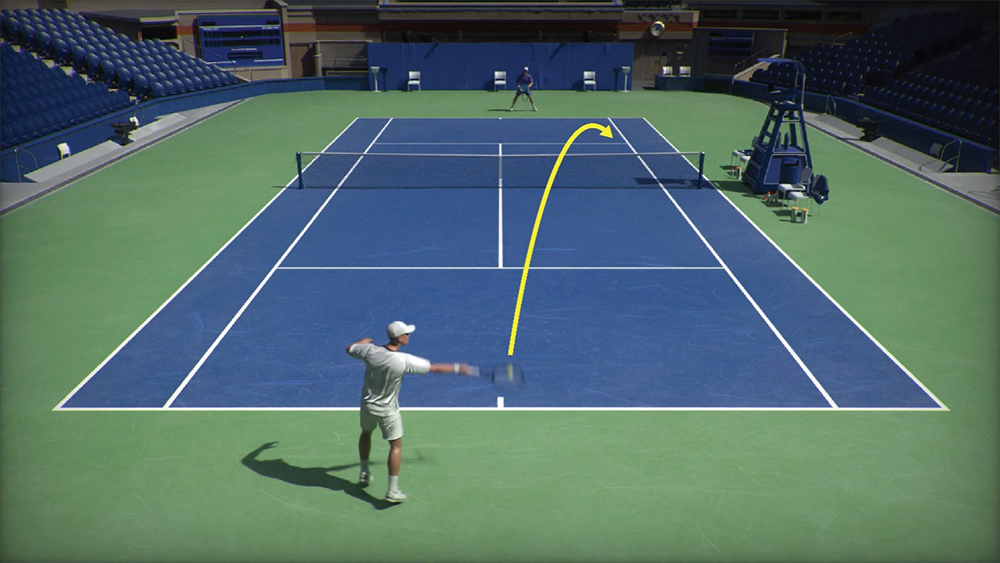

“Learning Physically Simulated Tennis Skills From Broadcast Videos” © 2023 Zhang, Yuan, Makoviychuk, Guo, Fidler, Peng, Fatahalian


SIGGRAPH 2023 Technical Papers research showcases innovations that push the field of character animation further.

CHICAGO—Since its inception 50 years ago, SIGGRAPH has served as an epicenter for inventive ideas and innovative research in the ever-evolving field of computer graphics and interactive techniques. These innovations have given us realistic CGI, or computer-generated imagery, which helped propel traditional filmmaking and gaming into a new era.

This year, computer scientists, artists, developers, and industry experts from around the world will convene 6–10 August in Los Angeles for SIGGRAPH 2023. Fittingly, notes Conference Chair Erik Brunvand, this year's theme recognizes 2023 as the “Age of SIGGRAPH,” honoring the full chronology of the industry, the community, and the organization: then, now, and far into the future.

Ongoing advances in character animation are one example of CGI innovation. Many of these advances are driven by motion capture technology, which consistently delivers the efficient, state-of-the-art visual effects prevalent in our beloved superhero blockbusters.

The creative potential in the field of character animation research remains endless.

“Character animation represents a truly unique field within computer graphics. The goal of character animation is to replicate the intelligence and behavior of living beings, and this extends not only to humans and animals, but also to imaginary creatures,” says Libin Liu, assistant professor at Peking University who will be presenting new research, along with his team, as part of the SIGGRAPH 2023 Technical Papers program.

“Over the years, the research community has explored many approaches toward achieving this goal … and with the exciting progress we’ve seen in AI, there will be a boom in research that utilizes large language models or more comprehensive multi-modal models, as the ‘brain’ for the character, coupled with the development of new motion representation and generation frameworks to translate the ‘thoughts’ of this brain into realistic actions,” says Liu. “It’s an exciting time for all of us in this field.”

As a preview of the popular Technical Papers program, here is a spotlight on three distinct approaches that advance the field of character animation even further.

Body Language

Many of us unconsciously express ourselves through physical gestures as we converse. Some of us gesture with our hands, shift our posture to make a point, or engage another part of the body, such as the eyes or legs, while we talk. Indeed, speech and communication go hand in hand with physical gesturing, a complex interplay that is difficult to represent digitally.

A team of researchers from Peking University in China and the National Key Lab of General AI has introduced a sophisticated computational framework that captures the detailed nuances of the gestures humans make while speaking. The framework also lets users control those details through a broad range of inputs, including a short text description, a brief demonstration clip, or even data representing animal gestures, such as video of a bird flapping or spreading its wings.

At the core of the team’s new system is a novel motion representation, quantized latent motion embeddings, coupled with diffusion models, one of the key technologies behind recent AI-driven image generation. This representation significantly reduces ambiguity and ensures natural, diverse movements. The researchers also extended the CLIP model developed by OpenAI so that it can interpret style descriptions in multiple forms, and they developed an efficient technique for analyzing sentences, enabling the digital character to understand the semantics of speech and determine the best moment to gesture.
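The phrase “quantized latent motion embeddings” refers to a general technique, vector quantization, in which a continuous feature vector is replaced by its nearest entry in a codebook, turning a stream of continuous values into a sequence of discrete tokens. The minimal Python sketch below illustrates only that snapping step, under stated assumptions: the tiny two-dimensional codebook, its values, and the function name `quantize` are hypothetical illustrations, not the researchers’ implementation, and a real system would learn the codebook and operate on much higher-dimensional embeddings.

```python
import math

# Hypothetical 2-D codebook; in a real system these vectors would be learned.
CODEBOOK = [
    (0.0, 0.0),
    (1.0, 0.0),
    (0.0, 1.0),
    (1.0, 1.0),
]

def quantize(latent, codebook=CODEBOOK):
    """Return (index, code) for the codebook entry nearest to `latent`
    under Euclidean distance (plain vector quantization)."""
    best_index = min(
        range(len(codebook)),
        key=lambda i: math.dist(latent, codebook[i]),
    )
    return best_index, codebook[best_index]

# Each frame's continuous embedding becomes a discrete token index.
frames = [(0.1, 0.2), (0.9, 0.1), (0.8, 0.95)]
tokens = [quantize(f)[0] for f in frames]
print(tokens)  # prints [0, 1, 3]
```

The diffusion model and the CLIP-based style conditioning described above are not shown; this sketch only demonstrates what “quantized” means for a single embedding.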

Building on advances in the digital human space, the work addresses the challenge of producing digital characters that can gesture naturally during conversations with minimal direction or instruction. The system accepts style prompts in the form of short texts, motion sequences, or video clips, and it provides body-part-specific style control: for instance, combining a yoga pose (warrior one) with gestures that convey feeling “happy” or “sad.”

“With this work, we’ve moved another step closer to making digital humans behave like their real-life counterparts. Our system equips these virtual characters with the capability to perform natural and diversified gestures during conversations, thereby considerably enhancing the realism and immersion of interactions,” says Liu, a lead author of the research and assistant professor at Peking University’s School of Intelligence Science and Technology.

“Perhaps the most exciting aspect of this technology is its ability to let users intuitively control the character’s motion using language and demonstrations. This also allows the system to interface seamlessly with advanced artificial intelligence like ChatGPT, bringing an increased level of intelligence and lifelikeness to our digital characters.”

Liu and his collaborators, Tenglong Ao and Zeyi Zhang, both at Peking University, are set to demonstrate their new work at SIGGRAPH 2023. View the team’s paper and accompanying video on their project page.

Realistic Robots in Motion

Who doesn’t love a dancing robot? Yet easily replicating or simulating dynamic motions on legged robots remains a challenge in the field of character animation. In new research, an international team of researchers from Disney Research Imagineering and ETH Zürich describes an innovative technique for optimally retargeting expressive physical motions onto freely walking robots.

Retargeting, or adapting existing motion, whether from motion capture data or from artist-created animation, is a quicker way to produce physical motion in the digital world. What makes transferring that motion onto a different system so challenging is accounting for significant differences in proportions, mass distribution, and number of degrees of freedom.

To that end, this new technique enables the retargeting of captured or artist-provided motion onto legged robots of vastly different proportions and mass distributions.

“We can take an input motion, and then automatically solve for the best possible way that a robot can execute that motion,” note the researchers. Their method accounts for both the robot’s dynamics and its actuation limits, which means that even highly dynamic motions can be successfully retargeted. As a result, the robot can perform the motion without losing its balance, which is no easy feat.

Retargeting motion while keeping the robot balanced is a major hurdle the team has overcome with this new approach. Because of the significant differences in size and shape between a legged robot and the animals or artist-created rigs that supply its motions, such retargeting is difficult to achieve with standard optimal control techniques and manual trial and error.

The researchers’ approach is a differentiable optimal control (DOC) technique that solves for a comprehensive set of parameters, making the retargeting agnostic to differences in proportions, mass distribution, and number of degrees of freedom between the source of the input motion and the physical robot.

The team behind DOC includes Ruben Grandia, Espen Knoop, Christian Schumacher, and Moritz Bächer at Disney Research and Farbod Farshidian and Marco Hutter at ETH Zürich. They will showcase their work as part of the SIGGRAPH 2023 Technical Papers program. For the paper and video, visit the team’s project page.

Tennis, Anyone?

For computer gaming enthusiasts, the ultimate dream is to control players in the virtual world in a way that mirrors real athleticism and movement in the physical world. The authenticity of the game is what counts.

A global team of computer scientists, one of whom is also a tennis expert and NCAA tennis champion, has developed a physics-based animation system that can produce diverse and complex tennis skills using only motion data from videos. The team’s computational framework enables two simulated characters to engage in extended tennis rallies, guided by controllers learned from match videos featuring different players.

“Our work demonstrates the exciting possibility of using abundant sports videos from the internet to create virtual characters that can be controlled. It opens up a future where anyone can bring virtual characters to life using their own videos,” says Haotian Zhang, first author of the new research and a Ph.D. student studying under Kayvon Fatahalian, associate professor of computer science at Stanford University. Zhang and Fatahalian are set to present their work at SIGGRAPH 2023, along with collaborators from NVIDIA, University of Toronto, Vector Institute, and Simon Fraser University.

“Imagine the incredible creativity and convenience as people from all backgrounds can now take charge of animation and make their ideas come alive,” adds Zhang. “The potential is limitless, and the ability to animate virtual characters is now within easy reach for everyone.”

The researchers demonstrate the system through a number of examples, including replicating different player styles, such as a right-handed player using a two-handed backhand and vice versa, and capturing diverse tennis skills such as the serve, forehand topspin, and backhand slice, to name a few. The system can produce two physically simulated characters playing extended tennis rallies with simulated racket and ball-handling dynamics.

Along with Zhang and Fatahalian, collaborators Ye Yuan, Viktor Makoviychuk, and Yunrong Guo at NVIDIA; Sanja Fidler at the University of Toronto; and Xue Bin Peng at NVIDIA and Simon Fraser University will demonstrate the work at SIGGRAPH 2023. For the full paper and video, visit the team’s page.

Each year, the SIGGRAPH Technical Papers program spans research areas from animation, simulation, and imaging to geometry, modeling, human-computer interaction, fabrication, robotics, and more. Get a glimpse of this year’s content by watching the SIGGRAPH 2023 Technical Papers trailer, and view the full program for even more detail. Visit the SIGGRAPH 2023 website to register.

###

About ACM, ACM SIGGRAPH, and SIGGRAPH 2023

ACM, the Association for Computing Machinery, is the world’s largest educational and scientific computing society, uniting educators, researchers, and professionals to inspire dialogue, share resources, and address the field’s challenges. ACM SIGGRAPH is a special interest group within ACM that serves as an interdisciplinary community for members in research, technology, and applications in computer graphics and interactive techniques. The SIGGRAPH conference is the world’s leading annual interdisciplinary educational experience showcasing the latest in computer graphics and interactive techniques. SIGGRAPH 2023, the 50th annual conference hosted by ACM SIGGRAPH, will take place live 6–10 August at the Los Angeles Convention Center, along with a virtual access option.